Resources.

Featured content.

Zurich, 16 April 2024 – 95 percent of IT leaders across industries have voiced concerns over the implications of not modernising[…]


Zurich, 16 April 2024 – 95 percent of IT leaders are concerned that applications and data running on mainframe systems[…]


“Risk of Doing Nothing”: 95% of IT Leaders Concerned About Mainframe Modernization Backlog – ISG Survey Reveals

Zurich, 16 April 2024 – 95 percent of IT leaders across industries have voiced concerns over[…]

“The Risk of Doing Nothing”: 95% of IT Leaders Concerned About Modernisation Backlog in Mainframe Systems - ISG Survey Published (German version)

Zurich, 16 April 2024 – 95 percent of IT leaders are concerned that applications and[…]

“The Risk of Doing Nothing”: 95% of IT Leaders Worry About the Delay in Mainframe Modernisation, According to an ISG Survey (French version)

Zurich, 16 April 2024 – 95% of IT leaders across all sectors[…]

Unlocking the Future with Mainframe Modernisation: An ISG Thought Leadership Paper

Unlocking the Future with Mainframe Modernisation: An ISG Thought Leadership Paper. Key Considerations for Modernizing Mainframes[…]

Mainframe migration or investment protection? Go for both!

Why waste significant investments in existing applications? Captured in proprietary applications, a company’s existing business[…]

ISG Sweet Spot Report: LzLabs

Emphasising incremental modernisation and cost reduction, LzLabs supports both legacy and modern programming languages while ensuring seamless cloud integration.

Step by Step to Success: Mainframe Migration to the AWS Cloud

Did you know that a significant number of businesses today still rely on legacy mainframe[…]

Security: A Focus for the Mainframe Migration Path

Mainframe migration to modern Linux environments presents a unique set of challenges and opportunities, especially[…]

Navigating Modernisation Challenges: Incremental, Low-Risk Approaches to Success

Rather than opting for drastic transformations, businesses increasingly embrace a more incremental and low-risk approach[…]

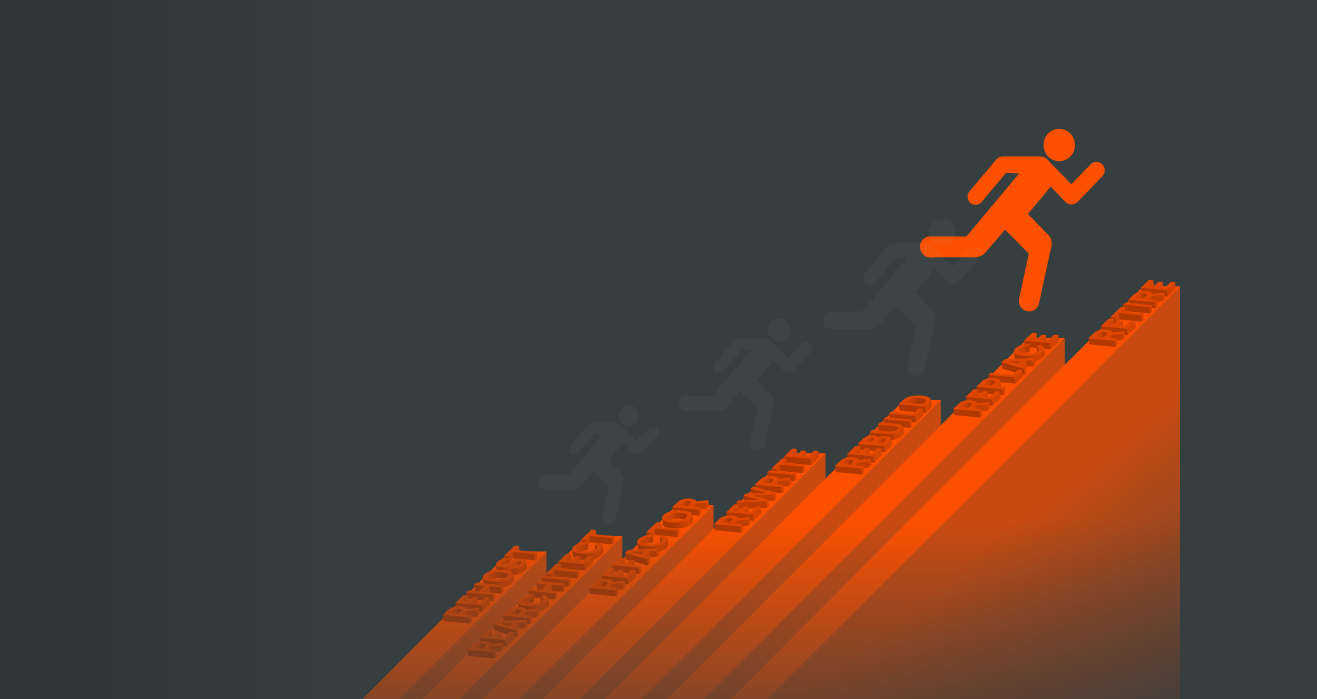

How to Apply the Seven Rs of Modernisation: FAQ

How can legacy IT modernisation be less of a challenge and more of an opportunity?[…]

Amazon Web Services (AWS) Certifies LzLabs Software Defined Mainframe®

Wallisellen, 09 January 2024 – LzLabs today announced that it has successfully completed the Amazon[…]

Amazon Web Services (AWS) Certifies the LzLabs Software Defined Mainframe® (German version)

Wallisellen, 09 January 2024 – LzLabs announces today that the company has […] the Amazon Web[…]

Consult our experts.

Are you interested in discussing our solution and learning how we can help you reach your enterprise goals? LzLabs has legacy experts, thought leaders, and modern system architects ready to assist you.