At the other extreme of computing history, fifty years later, Java, created by James Gosling at Sun Microsystems and released in 1995, is clearly the leading programming language in today’s corporate world. It delivers the very powerful abstractions of object-oriented programming, backed by the most extensive tooling ever developed for any language and by an immense cornucopia of open-source packages that provide useful “boilerplate code” or functional building blocks, greatly reducing the effort needed to develop an application. Additionally, Java is supported by a wide community of developers, much wider than the community of COBOL experts, who are among the currently disappearing IT species. Nonetheless, COBOL is still massively present in the corporate world: published figures state that over 200 billion lines of such code are still in production, mostly on mainframes running legacy workloads.

So these two worlds coexist in the enterprise, though mostly in isolation from one another! This is a pity, because it means a lot of duplicated effort on both sides and big problems whenever the two worlds must communicate and exchange data.

Leverage legacy applications as a valuable asset

The LzLabs Software Defined Mainframe® (SDM) offers a straightforward path to fix this issue and to leverage legacy applications as a valuable asset rather than carrying them over as a massive burden. This approach rests on a solid rationale:

- As stated above, the COBOL estate is still massive and cannot simply be forgotten in the dark background of shinier, modern IT systems. It should be leveraged instead.

- It represents a massive investment for each of our corporate customers: in a given bank, the core banking system, i.e., the root of the IT system, usually comprises between 10 and 15 million lines of COBOL. Estimates of the cost of those fully-baked lines of code vary widely, but a range of $10 to $20 per line doesn’t seem unreasonable. Such a core banking system is therefore worth $100M (10 million lines at $10) to $300M (15 million lines at $20) in internal person-years of work spent engraving the core rules of the banking business in rock-solid software. At the same rates, the 200 billion lines mentioned above represent an investment of $2 to $4 trillion: something that is hard to reject, forget or ignore in tough economic times when competition is harder than ever!

- This legacy software “does the job”: in our projects, we frequently encounter programs that have not been recompiled in the last 15 years. This strongly suggests that they were well designed to satisfy business needs over a long period of time. Why rewrite programs now that have worked satisfactorily for so long and still satisfy today’s business needs?

- Legacy software most often represents the “system of record”, to use Gartner’s term. As such, it is the set of programs needed to keep track of the numbers, guaranteeing the coherency of the business by making it transparent. Similarly, it ensures compliance with regulations. However, it is neither the “system of differentiation” nor the “system of innovation” (again, per Gartner’s taxonomy), i.e., the place where you can outpace your competition to become a market leader!

Systems of differentiation and innovation are instead implemented as new business applications that sit on top of the system of record and depend on it. SDM therefore provides smooth interoperability between the stable COBOL legacy and these innovative new business applications written in Java.

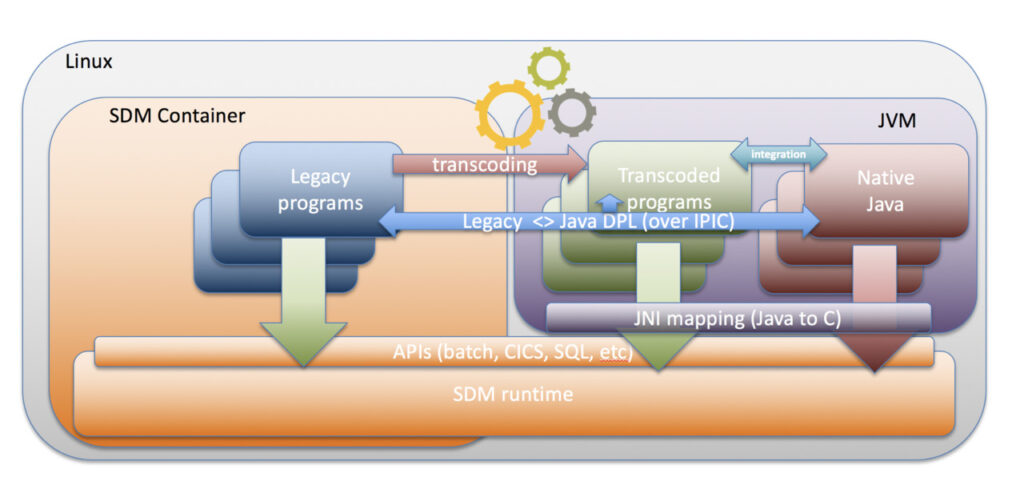

As depicted in the picture above (COBOL-to-Java transcoding will be detailed in a subsequent article), SDM offers this interoperability through APIs that wrap its runtime functions in Java form and take care of data marshalling (conversion between mainframe and Java encodings, adaptation between mainframe and Java data types, etc.). Considerable design effort went into keeping this interface as unobtrusive as possible: rather than a massive framework imposing stringent rules in exchange for its benefits, it is a minimal library, included in the development and runtime environments, that calls the SDM interfaces.
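To give a feel for the kind of type adaptation that marshalling involves, here is a minimal sketch of one such conversion: decoding and encoding a COBOL packed-decimal (COMP-3) field, such as PIC S9(5)V99 COMP-3, to and from java.math.BigDecimal. The class PackedDecimal and its methods are hypothetical illustrations, not part of the SDM API; a real interface library would also have to handle EBCDIC text, binary (COMP) fields and complete record layouts.

```java
import java.math.BigDecimal;
import java.math.BigInteger;

// Hypothetical helper illustrating one marshalling step; NOT an SDM API.
// COMP-3 stores two decimal digits per byte; the low nibble of the last byte is the sign.
final class PackedDecimal {

    private PackedDecimal() {}

    /** Decodes a COMP-3 field; scale is the number of implied decimal places (digits after V). */
    static BigDecimal decode(byte[] field, int scale) {
        BigInteger unscaled = BigInteger.ZERO;
        boolean negative = false;
        for (int i = 0; i < field.length; i++) {
            int high = (field[i] >> 4) & 0x0F;
            int low = field[i] & 0x0F;
            unscaled = appendDigit(unscaled, high);
            if (i < field.length - 1) {
                unscaled = appendDigit(unscaled, low);
            } else if (low == 0x0B || low == 0x0D) {
                negative = true;                    // 0xB and 0xD denote negative values
            } else if (low < 0x0A) {
                throw new IllegalArgumentException("invalid sign nibble: " + low);
            }                                       // 0xA, 0xC, 0xE, 0xF denote positive values
        }
        return new BigDecimal(negative ? unscaled.negate() : unscaled, scale);
    }

    /** Encodes a value into a COMP-3 field declared with the given total digits and scale. */
    static byte[] encode(BigDecimal value, int digits, int scale) {
        // setScale throws ArithmeticException if the value has more decimals than the field allows
        BigInteger unscaled = value.setScale(scale).unscaledValue();
        String magnitude = unscaled.abs().toString();
        if (magnitude.length() > digits) {
            throw new IllegalArgumentException("value does not fit in " + digits + " digits");
        }
        int length = digits / 2 + 1;                // bytes holding the digits plus one sign nibble
        int digitNibbles = 2 * length - 1;
        String padded = "0".repeat(digitNibbles - magnitude.length()) + magnitude;
        byte[] field = new byte[length];
        for (int n = 0; n < digitNibbles; n++) {
            int digit = padded.charAt(n) - '0';
            field[n / 2] |= (byte) (n % 2 == 0 ? digit << 4 : digit);
        }
        field[length - 1] |= (byte) (unscaled.signum() < 0 ? 0x0D : 0x0C);
        return field;
    }

    private static BigInteger appendDigit(BigInteger acc, int digit) {
        if (digit > 9) {
            throw new IllegalArgumentException("invalid digit nibble: " + digit);
        }
        return acc.multiply(BigInteger.TEN).add(BigInteger.valueOf(digit));
    }
}
```

For instance, the 4-byte field 0x12 0x34 0x56 0x7C decoded with a scale of 2 yields 12345.67. BigDecimal is used rather than double so that no binary rounding error creeps into monetary amounts.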

For example…

Say your newly written Java application needs to call a CICS® program named XYZ, which writes its result to a CICS temporary storage (TS) queue named QUEUE123. Both calling the program and reading the queue items from Java are quite a challenge in a standard mainframe environment. But once your legacy application has been rehosted, it becomes a breeze: the new Java class only needs a call along the lines of the pseudo-code SDM.link("XYZ", commarea_parameters), where commarea_parameters holds the parameters XYZ expects in order to deliver its results. After SDM.link() returns, i.e., after XYZ has executed, a subsequent SDM.readqTS("QUEUE123", item_nbr) reads the item at the designated index in the queue, which resides in the runtime of our LzOnline® (the component that functionally replaces the mainframe transaction monitor).

In this example, the interface library and SDM itself take care of all the heavy lifting: data is marshalled from Java to COBOL format, and a transaction is triggered and executed in LzOnline to run XYZ. The second call then accesses the SDM runtime, which is shared between Java and the legacy application, and navigates its entities down to QUEUE123 to retrieve the item the Java application expects. On the way back, the interface library marshals data in the reverse direction, from COBOL to Java format.
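The calling pattern in this example can be sketched in Java as follows. Since the article only gives pseudo-code, everything here is an assumption: the LegacyRuntime interface, its link and readqTs methods, and the in-memory FakeRuntime are hypothetical stand-ins for SDM’s actual Java API, which may look quite different. The fake only lets the pattern run without a mainframe; it mimics CICS by using 1-based item numbers and raising errors named after the CICS PGMIDERR, QIDERR and ITEMERR conditions.

```java
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Function;

/** Hypothetical interface mirroring the pseudo-code calls; NOT the actual SDM API. */
interface LegacyRuntime {
    /** Runs a legacy program with the given COMMAREA and returns the updated COMMAREA. */
    byte[] link(String program, byte[] commarea);

    /** Reads item itemNumber (1-based, as in CICS) of a temporary storage queue. */
    byte[] readqTs(String queue, int itemNumber);
}

/** Hypothetical in-memory stand-in, used only to exercise the calling pattern. */
final class FakeRuntime implements LegacyRuntime {
    private final Map<String, Function<byte[], byte[]>> programs = new HashMap<>();
    private final Map<String, List<byte[]>> tsQueues = new HashMap<>();

    void register(String name, Function<byte[], byte[]> program) {
        programs.put(name, program);
    }

    void writeqTs(String queue, byte[] item) {
        tsQueues.computeIfAbsent(queue, q -> new ArrayList<>()).add(item);
    }

    @Override
    public byte[] link(String program, byte[] commarea) {
        Function<byte[], byte[]> p = programs.get(program);
        if (p == null) {
            throw new IllegalStateException("PGMIDERR: " + program);
        }
        return p.apply(commarea);
    }

    @Override
    public byte[] readqTs(String queue, int itemNumber) {
        List<byte[]> items = tsQueues.get(queue);
        if (items == null) {
            throw new IllegalStateException("QIDERR: " + queue);
        }
        if (itemNumber < 1 || itemNumber > items.size()) {
            throw new IllegalStateException("ITEMERR: " + itemNumber);
        }
        return items.get(itemNumber - 1);
    }
}

/** The new Java application: calls XYZ, then reads the result XYZ left in QUEUE123. */
final class XyzClient {
    private final LegacyRuntime runtime;

    XyzClient(LegacyRuntime runtime) {
        this.runtime = runtime;
    }

    String callAndRead(String parameters, int itemNumber) {
        // Simplification: a real bridge would marshal into XYZ's COMMAREA layout (EBCDIC, COMP-3, ...).
        byte[] commarea = parameters.getBytes(StandardCharsets.UTF_8);
        runtime.link("XYZ", commarea);
        return new String(runtime.readqTs("QUEUE123", itemNumber), StandardCharsets.UTF_8);
    }
}
```

Keeping the new Java code behind a small interface like this also lets it be unit-tested against a fake, as here, before it runs against the real rehosted XYZ.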

Interconnection with the legacy world

All this activity happens “behind the scenes” for Java programmers: interconnecting with the legacy world becomes a smooth experience that encourages them to make the most of those valuable assets. It also keeps them from trying to “ignore the past” and mistakenly working around the rules of the system of record.

Additionally, SDM offers its services in the other direction as well: COBOL programs rehosted on our system can call Java classes as easily as they call other COBOL programs. This addresses a valuable use case: the “cold zones” described above (programs neither changed nor recompiled for many years) sit side by side with “hot zones”, i.e., program sets that change very often. In the banking industry, for example, hot zones are usually the programs dealing with compliance with financial regulations, which change at an increasing pace nowadays. It therefore makes a lot of sense to shift those programs to Java, leveraging the much higher abstraction power of the object-oriented paradigm to stay productive in the face of such constant change.
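As an illustration of why object orientation suits such hot zones, here is a hypothetical sketch of a regulatory screening program moved to Java: each regulation becomes a separate rule object, so a new or amended regulation means adding or replacing one rule rather than editing a monolithic program. The class ComplianceService, its screen method, the reason codes and the thresholds are all invented for illustration. In particular, the flat String/long signature only suggests what a COBOL CALL could be marshalled to; how SDM actually binds a COBOL call to a Java method is not described here.

```java
import java.util.List;
import java.util.Set;
import java.util.StringJoiner;

// Hypothetical "hot zone" program moved to Java; rules and thresholds are illustrative only.
final class ComplianceService {

    /** One regulatory check: returns a reason code, or null when the transaction passes. */
    @FunctionalInterface
    interface Rule {
        String check(String country, long amountCents);
    }

    // Adding or amending a regulation means touching one entry of this list.
    private static final Set<String> RESTRICTED_COUNTRIES = Set.of("XX", "YY");
    private static final List<Rule> RULES = List.of(
        (country, amountCents) -> amountCents >= 1_000_000L ? "LARGE-TRANSFER-REPORT" : null,
        (country, amountCents) -> RESTRICTED_COUNTRIES.contains(country) ? "RESTRICTED-COUNTRY" : null
    );

    private ComplianceService() {}

    /**
     * Flat entry point using simple types. Returns "" when every rule passes,
     * otherwise the reason codes of the failing rules separated by ';'.
     */
    static String screen(String country, long amountCents) {
        StringJoiner reasons = new StringJoiner(";");
        for (Rule rule : RULES) {
            String reason = rule.check(country, amountCents);
            if (reason != null) {
                reasons.add(reason);
            }
        }
        return reasons.toString();
    }
}
```

Because each rule is a plain object, it can be unit-tested and replaced independently, which is much of the productivity argument for moving frequently changing regulatory code out of COBOL.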

Leveraging services that still “do the job”

All in all, the SDM approach to modernizing the development process is clearly gradual: it is not only about “doing things right”, i.e., shifting to Java to leverage the benefits described in the introduction, but also, and above all, about “doing the right things”, i.e., focusing modernization on the business functions that matter most while leveraging the services that still “do the job” in their original mainframe form.

LzLabs’ objectives are not only “tactical”, i.e., enabling massive savings of up to 70% in the most favorable situations, when customers rehost their entire mainframe workload onto SDM. Our goals are also very much “strategic”: we want to give our customers the widest possible set of application modernization options, so that they can carry out their gradual business transformation toward a fully digital arena as successfully as possible!

Although it lets customers take advantage of the highly efficient economics of x86/Linux combined with cloud and open-source software, SDM is much more than a direct rehosting solution generating massive savings: it is a technology platform that enables new forms of business, where legacy is a valued and leveraged asset rather than a burden.